How optical switching technology is reshaping the way hyperscale data centers grow, adapt, and operate

Modern data centers are among the most complex physical infrastructures ever built. Behind every cloud application, AI workload, or streamed video is a fabric of thousands of interconnected servers and switches, and the architecture underlying most of that connectivity is the Clos network.

Originally conceived at Bell Labs in 1952 for telephone switching, the Clos design organizes switches into multiple stages. Data centers use a folded form of it, the leaf-and-spine hierarchy: servers connect to leaf switches at the top of each rack, and those leaves fan upward to a spine layer of core switches. Provisioned with enough spine capacity, the Clos topology provides full, non-blocking connectivity across the entire facility, so any server can reach any other server without bottlenecks, as long as the physical wiring is correct.
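For readers who want the precise meaning of "non-blocking", Clos's original three-stage analysis states it as a condition on the middle stage. The notation here is ours, not the article's: r ingress switches with n inputs each, r egress switches with n outputs each, and m middle-stage switches, each wired once to every ingress and every egress switch.

```latex
\begin{aligned}
&\text{Strict-sense non-blocking (Clos):} && m \ge 2n - 1 \\
&\text{Rearrangeably non-blocking (Slepian--Duguid):} && m \ge n \\
&\text{Example, } n = 32: && m \ge 63 \text{ (strict)}, \quad m \ge 32 \text{ (rearrangeable)}
\end{aligned}
```

In the folded leaf-and-spine form, the middle stage corresponds to the spine. Packet-switched fabrics usually use "non-blocking" in a looser sense, meaning 1:1 oversubscription: each leaf's uplink bandwidth is at least equal to its server-facing bandwidth.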

That last qualifier matters more than it might seem.

The Shuffle Box: Cheap, Simple, and Inflexible

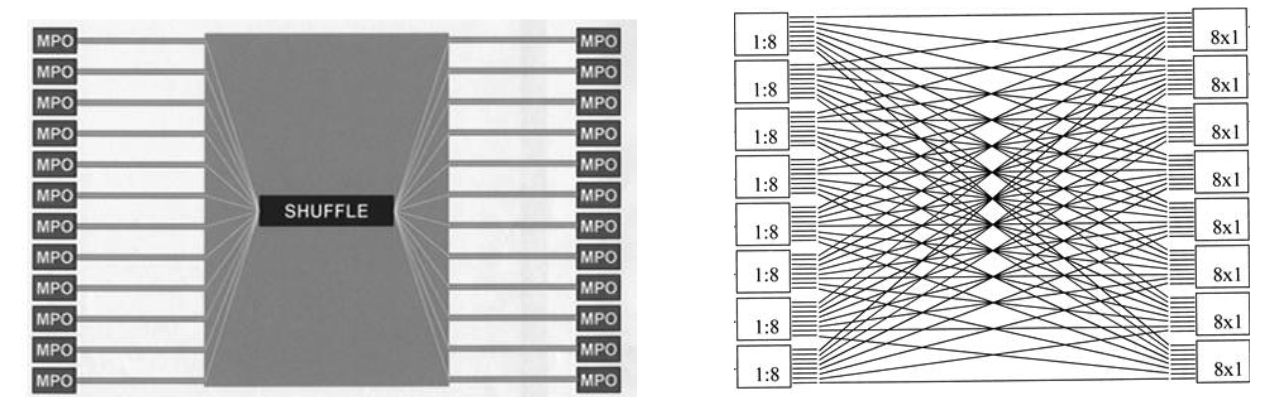

The physical connections between leaf and spine in a Clos network are not simple one-to-one cables. A large facility may have hundreds of aggregation blocks, each requiring precisely distributed fiber connections across every spine block. Managing this at scale requires structured interconnect hardware — which is the role of the shuffle box.

A shuffle box is a passive, pre-terminated fiber panel that takes inputs from many leaf switches and routes them to the appropriate spine switches in a single, compact assembly. Products like Corning's shuffle solutions implement this all-to-all connectivity pattern in hardware, with no active components, minimal insertion loss, and no power draw.
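The all-to-all pattern a shuffle box freezes into hardware is easy to state in code. The sketch below is a simplified model, assuming every leaf has one group of uplinks per spine and that ports are numbered consecutively; the function name `shuffle_map` and device names like `leaf0` and `spine0` are illustrative, not taken from any vendor's tooling.

```python
from typing import Dict, Tuple

Port = Tuple[str, int]  # (device name, port index)


def shuffle_map(num_leaves: int, num_spines: int, links_per_pair: int = 1) -> Dict[Port, Port]:
    """All-to-all leaf/spine striping, as a passive shuffle box would wire it.

    Leaf uplinks are grouped by spine: leaf uplink (spine * links_per_pair + k)
    goes to spine `spine`, arriving on its downlink (leaf * links_per_pair + k).
    """
    mapping: Dict[Port, Port] = {}
    for leaf in range(num_leaves):
        for spine in range(num_spines):
            for k in range(links_per_pair):
                leaf_port = spine * links_per_pair + k
                spine_port = leaf * links_per_pair + k
                mapping[(f"leaf{leaf}", leaf_port)] = (f"spine{spine}", spine_port)
    return mapping


if __name__ == "__main__":
    wiring = shuffle_map(num_leaves=4, num_spines=2)
    for leaf_end, spine_end in sorted(wiring.items()):
        print(leaf_end, "->", spine_end)
```

If each leaf's uplink count is fixed, growing from two spines to four means halving `links_per_pair`. In this model, for a two-leaf fabric, 7 of the 8 leaf-side connections then land somewhere new, and with a passive shuffle box each of those is a manual fiber move.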

For initial deployment, shuffle boxes are excellent. They are inexpensive, reliable, and straightforward to install. The problem is that they assume the topology will never change.

In practice, data centers are in constant flux. New server racks are added. Spine switches are upgraded to higher-bandwidth generations. Compute capacity is redistributed to accommodate AI workloads or new customer requirements. Every one of these changes requires modifying the physical wiring — which means dispatching technicians to manually re-patch fibers, a process that is slow, costly, and prone to error. A single mispatched fiber can silently degrade performance or cause an outage that takes days to diagnose. At hyperscale, with hundreds of thousands of fiber connections, even small error rates translate into significant operational pain.
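To put "small error rates" in numbers, the sketch below assumes, purely for illustration, that each manual patch independently goes wrong with some fixed probability, and that the as-built wiring can be compared against the cabling plan. In practice the observed map might come from neighbor discovery such as LLDP; that data source, the function names, and the 0.1% rate are all assumptions, not figures from the article.

```python
import math
from typing import Dict, List, Tuple

Port = Tuple[str, int]  # (device name, port index)


def expected_mispatches(connections: int, error_rate: float) -> float:
    """Mean number of wrong connections if each patch fails independently."""
    return connections * error_rate


def prob_any_mispatch(connections: int, error_rate: float) -> float:
    """P(at least one mispatch) = 1 - (1 - p)^N, computed stably via log1p/expm1."""
    return -math.expm1(connections * math.log1p(-error_rate))


def audit(expected: Dict[Port, Port], observed: Dict[Port, Port]) -> List[Port]:
    """Ports whose discovered neighbor differs from the cabling plan (or is missing)."""
    return sorted(port for port, peer in expected.items() if observed.get(port) != peer)


if __name__ == "__main__":
    # Hypothetical figures: 100,000 fibers patched with a 0.1% per-fiber error rate.
    print(expected_mispatches(100_000, 0.001))
    print(prob_any_mispatch(100_000, 0.001))

    plan = {("leaf0", 0): ("spine0", 0), ("leaf0", 1): ("spine1", 0)}
    seen = {("leaf0", 0): ("spine0", 0), ("leaf0", 1): ("spine2", 0)}  # one swapped fiber
    print(audit(plan, seen))
```

Under these assumptions, a 0.1% error rate across 100,000 fibers means about 100 expected mispatches, and at least one is a near certainty.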

The shuffle box, in short, is a perfect solution for a static world. Data centers stopped being static a long time ago.

Google's Radical Answer: Replace the Spine with Optics

Google confronted this problem at a scale few organizations face. With data center networks spanning continents and handling exabytes of traffic, the limitations of a fixed physical topology had real consequences for the efficiency and adaptability of their infrastructure.

Their answer, developed through projects internally known as Gemini and Apollo, was radical: eliminate the electrical spine layer entirely and replace it with optical circuit switches (OCS) built on MEMS (Micro-Electro-Mechanical Systems) mirror technology.

A MEMS optical switch uses arrays of microscopic silicon mirrors, each smaller than a millimeter, that can be individually tilted under electrostatic control to steer beams of light from input fibers to output fibers. Because no optical-electrical conversion takes place, the switch is data-rate agnostic and draws little power: it steers light rather than processing packets. Google's internally developed Palomar OCS delivers 136 non-blocking ports at roughly 108 watts, compared with approximately 3,000 watts for an equivalent electronic packet switch. Deployed across Google's fleet, this translated into a reported 40% reduction in spine-layer power and a 30% reduction in capital expenditure.
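Normalizing the quoted device figures per port shows the size of the gap. This assumes, as the word "equivalent" suggests but the figures do not state, that the electronic switch also has 136 ports:

```latex
\begin{aligned}
P_{\text{OCS}} &= \frac{108\ \text{W}}{136\ \text{ports}} \approx 0.79\ \text{W/port} \\
P_{\text{EPS}} &= \frac{3000\ \text{W}}{136\ \text{ports}} \approx 22.1\ \text{W/port} \\
\frac{P_{\text{OCS}}}{P_{\text{EPS}}} &= \frac{108}{3000} = 0.036 \approx 3.6\%
\end{aligned}
```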

Beyond raw efficiency, the OCS layer made the spine topologically flexible. Google's network team could periodically re-stripe which aggregation blocks connect to which others, optimizing the logical fabric for actual observed traffic patterns rather than theoretical uniformity.
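To make "optimizing the logical fabric for observed traffic" concrete, here is a toy illustration. It is not Google's algorithm; it is a simple greedy heuristic chosen for readability. Given a demand matrix between aggregation blocks and a fixed number of OCS-facing ports per block (both hypothetical inputs), it repeatedly adds a link to the block pair with the highest demand per link, as long as both blocks still have free ports.

```python
from typing import List, Optional, Tuple

Matrix = List[List[float]]


def allocate_links(demand: Matrix, ports_per_block: int) -> List[List[int]]:
    """Greedy demand-aware striping between aggregation blocks (toy heuristic).

    demand[i][j] is traffic from block i to block j; links are bidirectional,
    so both directions count toward the pair. Each block may terminate at most
    `ports_per_block` links. Pairs with zero demand receive no links.
    """
    n = len(demand)
    links = [[0] * n for _ in range(n)]
    free = [ports_per_block] * n
    while True:
        best: Optional[Tuple[int, int]] = None
        best_score = 0.0
        for i in range(n):
            for j in range(i + 1, n):
                if free[i] > 0 and free[j] > 0:
                    pair_demand = demand[i][j] + demand[j][i]
                    # Demand per link if this pair received one more link.
                    score = pair_demand / (links[i][j] + 1)
                    if score > best_score:
                        best, best_score = (i, j), score
        if best is None:
            return links
        i, j = best
        links[i][j] += 1
        links[j][i] += 1
        free[i] -= 1
        free[j] -= 1
```

With demand `[[0, 6, 2], [0, 0, 2], [0, 0, 0]]` and four ports per block, the heavy 0-1 pair receives three of block 0's four links, whereas a uniform stripe would split them evenly regardless of traffic.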

The results are genuinely impressive. But the cost of achieving them was enormous — and not primarily in dollars.

The Google approach required building an entirely new control plane. An optical circuit switch does not forward packets. It creates fixed point-to-point optical circuits that must be managed by software. Google had to extend its Orion SDN platform to orchestrate what became a dynamic direct-connect mesh, continuously monitoring traffic, computing optimal topologies, and reprogramming mirror positions accordingly. By Google's own description, this was a multi-year effort involving many engineering teams across the company.
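At its simplest, the state such a control plane manages is a partial permutation: each input port of an OCS is steered to at most one output port. The sketch below assumes a single switch with numbered ports and is in no way Orion's actual interface; the names `validate` and `plan_changes` are hypothetical. It checks that a target configuration is physically valid and computes the minimal set of circuit changes, so that unchanged circuits are left alone during a reconfiguration.

```python
from typing import Dict, Set, Tuple

CircuitMap = Dict[int, int]  # OCS input port -> output port
Circuit = Tuple[int, int]


def validate(circuits: CircuitMap, num_ports: int) -> None:
    """A cross-connect can use each input and each output at most once."""
    for src, dst in circuits.items():
        if not (0 <= src < num_ports and 0 <= dst < num_ports):
            raise ValueError(f"port out of range: {src}->{dst}")
    # Dict keys already make inputs unique; outputs must be unique too.
    if len(set(circuits.values())) != len(circuits):
        raise ValueError("two inputs steered to the same output")


def plan_changes(current: CircuitMap, target: CircuitMap) -> Tuple[Set[Circuit], Set[Circuit]]:
    """Circuits to tear down and to set up; circuits in both maps are untouched."""
    cur, tgt = set(current.items()), set(target.items())
    return cur - tgt, tgt - cur


if __name__ == "__main__":
    current = {0: 5, 1: 6, 2: 7}
    target = {0: 5, 1: 7, 3: 6}
    validate(target, num_ports=136)
    teardown, setup = plan_changes(current, target)
    print("tear down:", sorted(teardown), "set up:", sorted(setup))
```

Keeping the change set minimal matters because a torn-down circuit carries no traffic until its replacement is established; a production controller would typically also drain traffic from the affected links before moving any mirrors.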

Additionally, because each MEMS switch offers only 136 ports, Google used bidirectional WDM transceivers and optical circulators so that both directions of a link share a single fiber and switch port, stretching the limited port count at the cost of extra components, insertion loss, and complexity. Commercial MEMS products were not available at the required quality and scale, so Google manufactured tens of thousands of custom units internally.

The Google OCS story is a remarkable engineering achievement. It is not, however, a blueprint that most data center operators can follow.

The Telescent Approach: Automation Without Reinvention

Telescent's robotic patch panel system starts from a different premise: rather than replacing the network architecture, automate the physical fiber layer that sits beneath it.

The Telescent system uses a robotic mechanism to physically make and break optical connections within a patch panel under software control. Connections are fully latched mechanical contacts, functionally identical to those in a manual patch panel, with insertion loss below 0.5 dB and no sensitivity to data rate or protocol. The system integrates with existing SDN management platforms via a REST API, so topology changes can be initiated programmatically from the same tools already managing the network.

The latest generation scales to over 1,000 non-blocking MPO ports per system, consumes roughly 50 watts in steady state, and includes on-demand OTDR testing for proactive fault detection. A fiber move that previously required a technician on site can now be completed in minutes from a software interface, with a full audit log of every connection.
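To picture what "software control with an audit log" means, here is a toy in-memory model of a non-blocking patch panel. It is not Telescent's API: the `PatchPanel` class, its methods, and the simplified any-to-any port model are assumptions made for illustration. In a real deployment, requests would arrive through the vendor's REST API, whose endpoints are not described here.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Tuple


@dataclass
class PatchPanel:
    """Toy model of a software-controlled, non-blocking patch panel (hypothetical)."""

    num_ports: int
    links: Dict[int, int] = field(default_factory=dict)  # stored both ways: a->b and b->a
    log: List[Tuple[str, str, int, int]] = field(default_factory=list)

    def _record(self, action: str, a: int, b: int) -> None:
        # Every change is timestamped, giving the audit trail a manual panel lacks.
        self.log.append((datetime.now(timezone.utc).isoformat(), action, a, b))

    def _check_pair(self, a: int, b: int) -> None:
        if a == b or not (0 <= a < self.num_ports and 0 <= b < self.num_ports):
            raise ValueError(f"invalid port pair {a}, {b}")

    def connect(self, a: int, b: int) -> None:
        self._check_pair(a, b)
        if a in self.links or b in self.links:
            raise ValueError(f"port already in use: {a} or {b}")
        self.links[a], self.links[b] = b, a
        self._record("connect", a, b)

    def disconnect(self, a: int) -> None:
        b = self.links.pop(a)  # raises KeyError if port `a` is idle
        del self.links[b]
        self._record("disconnect", a, b)

    def move(self, a: int, new_peer: int) -> None:
        """Re-home port `a` onto `new_peer`: the job a technician would do by hand."""
        self._check_pair(a, new_peer)
        if new_peer in self.links:
            raise ValueError(f"port already in use: {new_peer}")
        self.disconnect(a)
        self.connect(a, new_peer)


if __name__ == "__main__":
    panel = PatchPanel(num_ports=8)
    panel.connect(0, 4)
    panel.move(0, 6)
    for entry in panel.log:
        print(entry)
```

`move` validates the new peer before disconnecting anything, so a rejected request leaves the existing connection in place.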

Critically, none of the existing network infrastructure changes. Leaf and spine switches keep running the same protocols and management tools, and the control plane is untouched. The Telescent system simply replaces the static fiber layer with a reconfigurable one: a drop-in augmentation of the physical layer rather than a reinvention of the architecture above it.

Choosing the Right Tool

For operators with Google's engineering resources and infrastructure scale, the MEMS OCS approach offers transformative efficiency gains — if they can absorb the complexity of building and operating a new optical control plane.

For everyone else, the Telescent robotic patch panel provides most of the operational benefits of a reconfigurable physical layer (faster expansion, elimination of manual patching errors, software-driven topology changes, and built-in diagnostics) without requiring a single line of new control-plane code.

As AI and high-performance computing push interconnect demands ever higher, the ability to reconfigure data center fiber topology dynamically will become a baseline operational requirement. The question is not whether to automate the physical layer, but how. For most operators, the answer that requires the least reinvention, and delivers the fastest results, is the one that starts where the shuffle box ends.